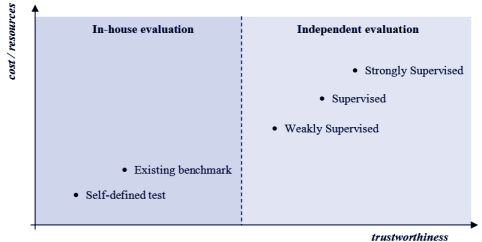

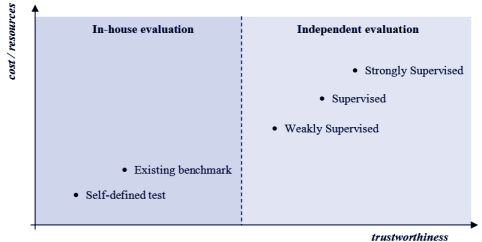

Performance Evaluation

Biometric performance evaluations can be classified into technology, scenario and operational evaluations. Technology evaluations test computer algorithms on archived biometric data collected with a "universal" (algorithm-independent) sensor. Scenario evaluations test biometric systems placed in a controlled, volunteer-user environment modeled on a proposed application. Operational evaluations attempt to analyze the performance of biometric systems deployed in real applications. Tests can also be characterized as on-line or off-line, depending on whether the test computations are conducted in the presence of the human user (on-line) or after the fact on stored data (off-line). An off-line test requires a pre-collected database of samples; this makes it possible to reproduce the test and to evaluate different algorithms under identical conditions. Off-line tests can be classified as follows:

- In-house - self-defined test: the database is collected internally and the testing protocol is self-defined. Generally the database is not publicly released, perhaps because of human-subject privacy concerns, and the protocols are not completely explained. As a consequence, results may not be comparable across such tests or reproducible by a third party.

- In-house - existing benchmark: the test is performed on a publicly available database, according to an existing protocol. Results are comparable with others obtained using the same protocol on the same database. Besides the trustworthiness problem (results are produced and reported by the testee itself), the main drawback is the risk of overfitting the data, that is, tuning the algorithm's parameters to fit only the data specific to this test.

- Independent - weakly supervised: the database is sequestered and made available only just before the beginning of the test. Samples are unlabelled (file names carry no information about the identity of each sample’s owner). The test is executed at the testee’s site and must be completed within a given time limit. Results are determined by the evaluator from the comparison scores produced by the testee during the test. The main criticism of this kind of evaluation is that it cannot prevent human intervention: visual inspection of the samples, editing of results, etc., could in principle be carried out with sufficient resources.

- Independent - supervised: this approach is very similar to the independent weakly supervised evaluation, but here the test is executed at the evaluator’s site on the testee’s hardware. The evaluator has better control over the evaluation, but: i) computational efficiency cannot be compared, since different testees may use different hardware; ii) some interesting statistics (e.g., template size, memory usage) cannot be obtained; iii) there is no way to prevent score normalization and template consolidation (i.e., techniques where information from previous comparisons is unfairly exploited to increase the accuracy of subsequent comparisons).

- Independent - strongly supervised: data are sequestered and not released before the conclusion of the test. Software components compliant with a given input/output protocol are tested at the evaluator’s site on the evaluator’s hardware. The tested algorithm is executed in a fully controlled environment, where all input/output operations are strictly monitored. The main drawback is the large amount of time and resources needed to organize such events. The Fingerprint Verification Competitions (FVC), co-organized by BioLab, are examples of independent - strongly supervised evaluations.
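Whichever of the off-line settings above is used, the evaluator ends up turning comparison scores, obtained under a fixed protocol on a stored database, into error rates. The sketch below illustrates that step under stated assumptions. It assumes an FVC-like protocol: genuine comparisons pair each impression with every other impression of the same finger, and impostor comparisons pair the first impression of each finger with the first impression of every other finger. It also assumes similarity scores, where higher means more similar, and a hypothetical `match` function standing in for the algorithm under test. The names `collect_scores`, `error_rates` and `approximate_eer` and the toy data are illustrative only. The EER estimate simply picks the threshold where FMR and FNMR are closest, which is a simplification of how published benchmarks compute it.

```python
"""Minimal sketch of an off-line, FVC-like verification test (assumed protocol)."""
from itertools import combinations
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical database layout: finger id -> list of impressions of that finger.
Database = Dict[str, List[object]]
# Hypothetical matcher under test: returns a similarity score (higher = more similar).
Matcher = Callable[[object, object], float]


def collect_scores(db: Database, match: Matcher) -> Tuple[List[float], List[float]]:
    """Run the assumed protocol and return (genuine, impostor) scores.

    Genuine: each impression vs. every other impression of the same finger,
    with symmetric pairs (a, b) / (b, a) compared only once.
    Impostor: the first impression of each finger vs. the first impression
    of every other finger, again without symmetric repeats.
    """
    genuine = [match(a, b) for imps in db.values() for a, b in combinations(imps, 2)]
    firsts = [imps[0] for imps in db.values()]
    impostor = [match(a, b) for a, b in combinations(firsts, 2)]
    return genuine, impostor


def error_rates(genuine: Sequence[float], impostor: Sequence[float], t: float) -> Tuple[float, float]:
    """Return (FMR, FNMR) at threshold t: a comparison is accepted when score >= t."""
    fmr = sum(s >= t for s in impostor) / len(impostor)  # impostors wrongly accepted
    fnmr = sum(s < t for s in genuine) / len(genuine)    # genuine attempts wrongly rejected
    return fmr, fnmr


def approximate_eer(genuine: Sequence[float], impostor: Sequence[float]) -> Tuple[float, float]:
    """Return (threshold, EER estimate), trying every observed score as a threshold.

    Simplification: picks the threshold where |FMR - FNMR| is smallest and
    averages the two rates there, instead of interpolating the exact crossing.
    """
    best_gap, best_t, best_eer = float("inf"), 0.0, 1.0
    for t in sorted(set(genuine) | set(impostor)):
        fmr, fnmr = error_rates(genuine, impostor, t)
        if abs(fmr - fnmr) < best_gap:
            best_gap, best_t, best_eer = abs(fmr - fnmr), t, (fmr + fnmr) / 2
    return best_t, best_eer


if __name__ == "__main__":
    # Toy stand-ins: scalar "samples" and a distance-based similarity.
    def toy_match(a: float, b: float) -> float:
        return 1.0 / (1.0 + abs(a - b))

    toy_db = {"f1": [1.0, 1.1, 0.9], "f2": [5.0, 5.2, 4.9], "f3": [9.0, 9.1, 8.8]}
    gen, imp = collect_scores(toy_db, toy_match)
    threshold, eer = approximate_eer(gen, imp)
    print(len(gen), len(imp), eer)  # 9 genuine, 3 impostor; classes separate, so EER is 0.0
```

Because every score comes from a fixed protocol over a stored database, rerunning `collect_scores` with a different matcher compares algorithms under identical conditions, which is what makes off-line tests reproducible. In the independent settings described above, what mainly changes is who executes the matcher and on which hardware.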

Bibliography

- D. Maltoni, D. Maio, A.K. Jain and J. Feng, Handbook of Fingerprint Recognition (Third Edition), Springer Nature, 2022.
- M. Ferrara, Biometric Fingerprint Recognition Systems, Lambert Academic Publishing, 2010.
- D. Maltoni, D. Maio, A.K. Jain and S. Prabhakar, Handbook of Fingerprint Recognition (Second Edition), Springer (London), 2009.
- J.L. Wayman, A.K. Jain, D. Maltoni and D. Maio, Biometric Systems - Technology, Design and Performance Evaluation, Springer, 2005.
- D. Maio, D. Maltoni, R. Cappelli, J.L. Wayman and A.K. Jain, "Technology Evaluation of Fingerprint Verification Algorithms", in J.L. Wayman, A.K. Jain, D. Maltoni and D. Maio, Biometric Systems - Technology, Design and Performance Evaluation, Springer, 2005.
- K. Raja, M. Ferrara, A. Franco, L. Spreeuwers, I. Batskos, F. De Wit, M. Gomez-Barrero, U. Scherhag, D. Fischer, S. Venkatesh, J. M. Singh, G. Li, L. Bergeron, S. Isadskiy, R. Raghavendra, C. Rathgeb, D. Frings, U. Seidel, F. Knopjes, R.J. Veldhuis, D. Maltoni and C. Busch, "Morphing Attack Detection - Database, Evaluation Platform and Benchmarking", IEEE Transactions on Information Forensics and Security, vol.16, pp.4336-4351, November 2020.
- M. Ferrara, A. Franco, D. Maio and D. Maltoni, "FICV: a new FVC-onGoing benchmark area on Face Compliance Verification to ISO/IEC 19794-5:2011 standard", EAB Newsletter, no.1, pp.15-17, April 2014.
- M. Ferrara, A. Franco, D. Maio and D. Maltoni, "Face Image Conformance to ISO/ICAO standards in Machine Readable Travel Documents", IEEE Transactions on Information Forensics and Security, vol.7, no.4, pp.1204-1213, August 2012.
- R. Cappelli, M. Ferrara, A. Franco and D. Maltoni, "Fingerprint verification competition 2006", Biometric Technology Today, vol.15, no.7-8, pp.7-9, August 2007.
- R. Cappelli, D. Maio, D. Maltoni, J.L. Wayman and A.K. Jain, "Performance Evaluation of Fingerprint Verification Systems", IEEE Transactions on Pattern Analysis and Machine Intelligence, vol.28, no.1, pp.3-18, January 2006.
- D. Maio, D. Maltoni, R. Cappelli, J.L. Wayman and A.K. Jain, "FVC2000: Fingerprint Verification Competition", IEEE Transactions on Pattern Analysis and Machine Intelligence, vol.24, no.3, pp.402-412, March 2002.
- M. Golfarelli, D. Maio and D. Maltoni, "On The Error-Reject tradeoff in Biometric Verification Systems", IEEE Transactions on Pattern Analysis and Machine Intelligence, vol.19, no.7, pp.786-796, July 1997.
- R. Cappelli, M. Ferrara, D. Maltoni and F. Turroni, "Fingerprint Verification Competition at IJCB2011", in Proceedings International Joint Conference on Biometrics (IJCB11), Washington DC, October 2011.
- R. Cappelli, M. Ferrara, D. Maltoni and M. Tistarelli, "MCC: a Baseline Algorithm for Fingerprint Verification in FVC-onGoing", in Proceedings 11th International Conference on Control, Automation, Robotics and Vision (ICARCV), Singapore, December 2010.
- R. Cappelli, D. Maltoni and F. Turroni, "Benchmarking Local Orientation Extraction in Fingerprint Recognition", in Proceedings 20th International Conference on Pattern Recognition (ICPR2010), Istanbul, pp.1144-1147, August 2010.
- R. Cappelli and D. Maltoni, "FVC-onGoing: On-line Evaluation of Fingerprint Recognition Algorithms", in Proceedings International Biometric Performance Conference (IBPC2010), Gaithersburg, Maryland, March 2010.
- B. Dorizzi, R. Cappelli, M. Ferrara, D. Maio, D. Maltoni, N. Houmani, S. Garcia-Salicetti and A. Mayoue, "Fingerprint and On-Line Signature Verification Competitions at ICB 2009", in Proceedings International Conference on Biometrics (ICB), Alghero, Italy, pp.725-732, June 2009.
- R. Cappelli, D. Maio and D. Maltoni, "Technology Evaluations of Fingerprint-Based Biometric Systems", in Proceedings 12th European Signal Processing Conference (EUSIPCO2004), Vienna, Austria, pp.1405-1408, September 2004. Invited paper.
- D. Maio, D. Maltoni, R. Cappelli, J.L. Wayman and A.K. Jain, "FVC2004: Third Fingerprint Verification Competition", in Proceedings International Conference on Biometric Authentication (ICBA04), Hong Kong, pp.1-7, July 2004.
- R. Cappelli, "FVC: Fingerprint Verification Competitions", in Proceedings Second BSI-Symposium on Biometrics 2004, Darmstadt (Germany), pp.56-63, March 2004.
- D. Maio, D. Maltoni, R. Cappelli, J.L. Wayman and A.K. Jain, "FVC2002: Second Fingerprint Verification Competition", in Proceedings 16th International Conference on Pattern Recognition (ICPR2002), Québec City, vol.3, pp.811-814, August 2002.
- R. Cappelli, D. Maio and D. Maltoni, "Indexing Fingerprint Databases for Efficient 1:N Matching", in Proceedings Sixth International Conference on Control, Automation, Robotics and Vision (ICARCV2000), Singapore, December 2000. Invited paper.
- D. Maio, D. Maltoni, R. Cappelli, J.L. Wayman and A.K. Jain, "FVC2000: Fingerprint Verification Competition", Technical Report, DEIS - University of Bologna, September 2000.
